In 2024, OpenAI CEO Sam Altman posited a bold, futuristic vision: a lone entrepreneur, armed with the leverage of generative AI, would soon build a company valued at over one billion dollars. It was a forecast that captured the imagination of Silicon Valley. By early 2026, Los Angeles-based entrepreneur Matthew Gallagher claimed to have turned that prophecy into reality with his telehealth startup, MEDVi.

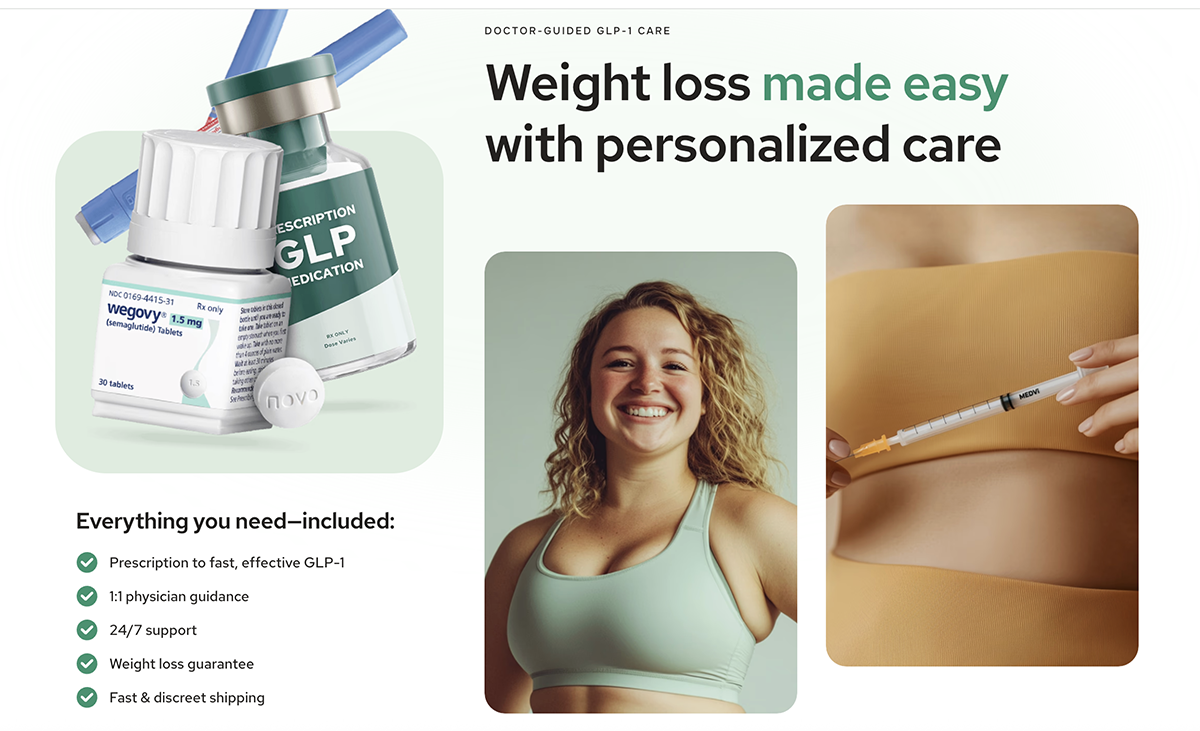

Marketed as the “fastest-growing company in history,” MEDVi promised a streamlined, AI-optimized path to obtaining weight-loss and lifestyle pharmaceuticals like GLP-1 agonists and erectile dysfunction medications. However, as the veneer of this "AI-first" success story is peeled back, a darker picture emerges—one of regulatory warnings, fabricated physician personas, questionable clinical efficacy, and a sprawling web of potential consumer fraud.

The Rise of an AI Unicorn

MEDVi’s narrative is one of extreme lean operations. Gallagher, along with his brother Elliot, claimed to have built a machine capable of generating $1.8 billion in projected 2026 revenue using only a handful of full-time staff. By leveraging generative AI tools like ChatGPT, Claude, and Midjourney, the company ostensibly automated everything from marketing copy to customer acquisition.

When The New York Times profiled the company in early April 2026, it served as a high-profile validation of the "one-person unicorn" thesis. The report, which noted that the Times had been granted access to internal financial documents, painted a picture of a company that had mastered the art of digital scale. Yet, the celebratory tone of the profile clashed violently with the reality of the company’s operations on the ground, which were already under scrutiny by federal authorities.

Chronology of a Regulatory Collision

The friction between MEDVi and federal regulators began long before the company hit the headlines as a success story.

- May 2025: Investigations by Futurism revealed that MEDVi was utilizing deepfaked before-and-after photos and AI-generated imagery of medical products. Furthermore, the site listed physicians who, when contacted, claimed to have no active affiliation with the company.

- December 2025: A coalition of 35 state attorneys general penned a letter to Meta, demanding action against the deluge of deceptive, AI-driven advertisements for weight-loss products that were flooding the platform—a category in which MEDVi was a prominent player.

- February 20, 2026: The FDA issued a formal warning letter to MEDVi, LLC. The agency explicitly cited the company for "misbranding" compounded drugs. The FDA took issue with the company’s marketing claims, which falsely suggested that its compounded versions of semaglutide and tirzepatide were FDA-approved, or that they were equivalent to brand-name drugs like Wegovy, Ozempic, and Mounjaro.

- March 20, 2026: A class-action lawsuit (James v. Medvi LLC) was filed in California, alleging the company benefited from massive amounts of affiliate spam.

- April 2, 2026: The New York Times published its profile of MEDVi, largely ignoring the February FDA warning letter.

- April 8, 2026: MEDVi issued a public statement attempting to distance itself from the FDA warning letter, claiming the site cited by the FDA belonged to an "affiliate marketing agency." However, the letter was addressed directly to the company at its Delaware office and used its official email domain.

The Anatomy of Deceptive Marketing

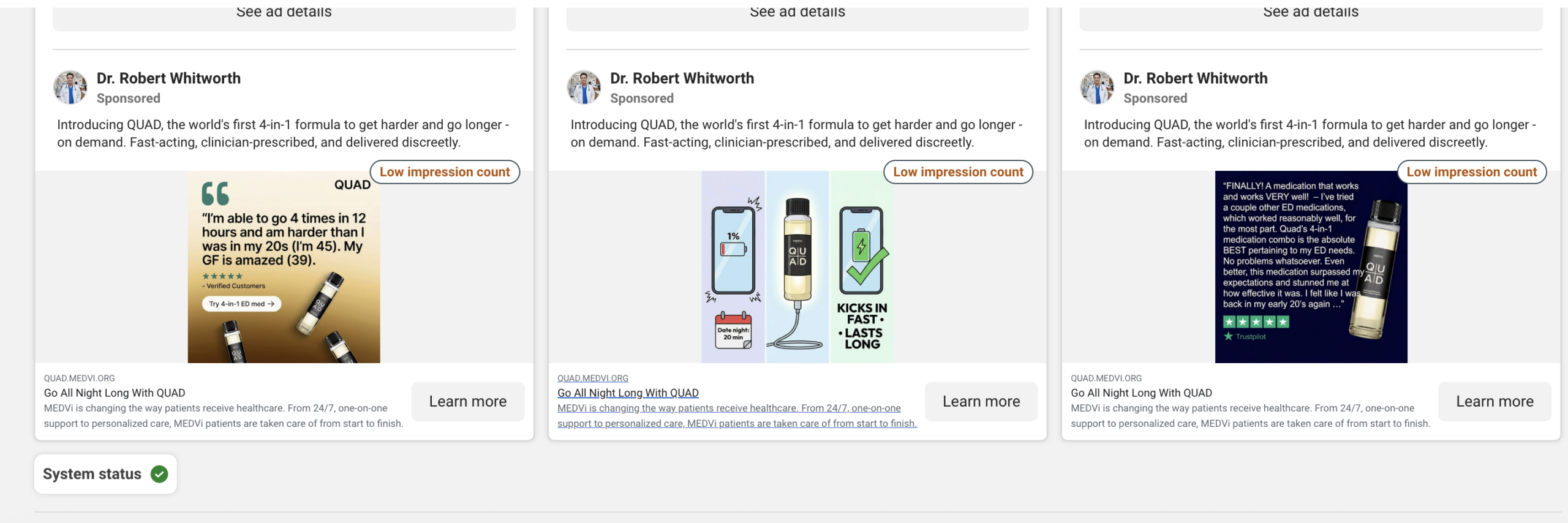

The core of the controversy lies in MEDVi’s reliance on what critics have termed "affiliate spam." A Drug Discovery & Development review of the company’s digital footprint revealed a chaotic ecosystem of fabricated identities.

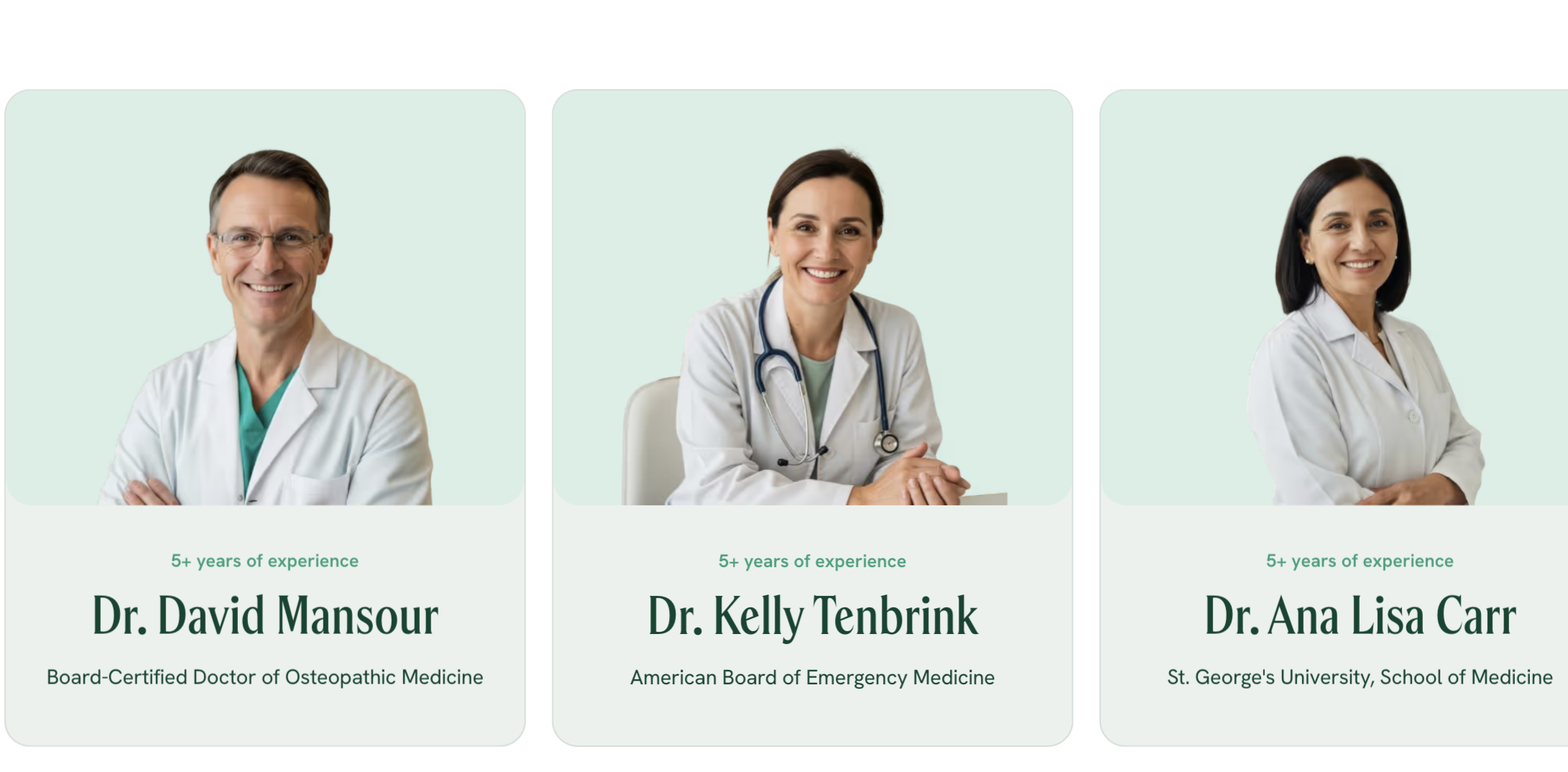

The Fabricated Physician Network

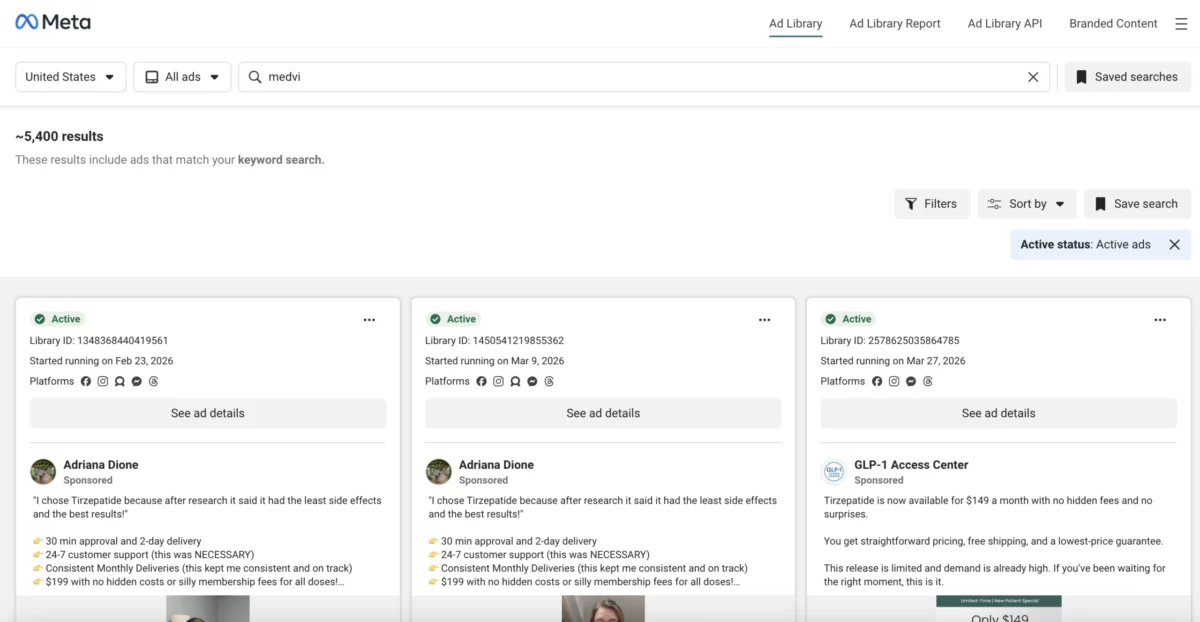

Meta’s Ad Library, which at one point hosted over 5,000 active advertisements for MEDVi, acted as a window into the company’s marketing tactics. These ads frequently featured "physicians" with impressive credentials who, upon closer inspection, appeared to be entirely fictional or to have had their identities misused.

One prominent Facebook page for "Dr. Robert Whitworth" promoted the company’s erectile dysfunction treatment while listing an address in Cameron, MT, that does not exist. Other personas, such as "Professor Albust Dongledore" and "Dr. Richard Hörzgock," appeared in video testimonials that recycled identical scripts across multiple pages. Even when real doctors were linked to the platform, their associations were often tenuous or misrepresented. For instance, Drs. Ana Lisa Carr and Kelly Tenbrink were listed on the site under credentials that did not align with their actual board certifications according to Florida health records. Both were scrubbed from the site immediately following inquiries from investigators.

AI-Generated Personas and Deepfakes

The fine print on the MEDVi website eventually conceded that "individuals appearing in advertisements may be actors or AI portraying doctors." This disclaimer, however, came only after months of criticism regarding the use of "deepfaked" weight-loss transformations—photos that were later traced to years-old stock imagery with faces swapped via AI.

Regulatory and Legal Implications

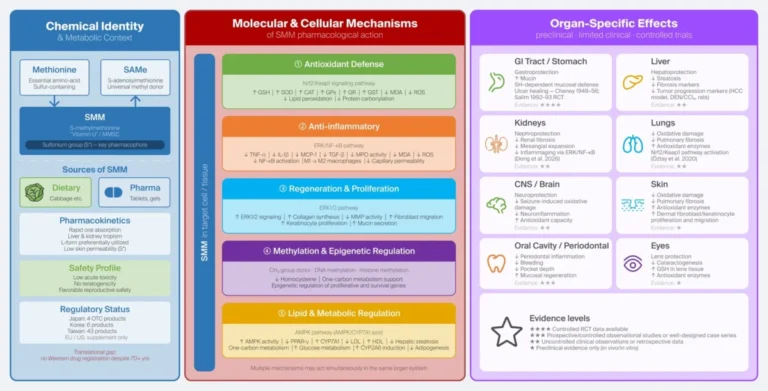

MEDVi’s business model is tethered to a narrowing regulatory window. The company relies on the sale of compounded GLP-1 medications—a practice permitted by the FDA primarily during drug shortages. However, the FDA declared the shortage of tirzepatide officially resolved in late 2024, followed by semaglutide in early 2025.

To circumvent this, MEDVi and similar startups have pivoted to "personalized" formulations, often adding substances like vitamin B-12 to the base drug to argue that the product is no longer "essentially a copy" of the FDA-approved version. The FDA, however, has signaled that this loophole is closing, warning that such modifications do not inherently bypass regulations unless a physician documents a "significant difference" for the patient.

The OpenLoop Health Connection

The legal risks extend beyond the FDA. MEDVi relies on third-party infrastructure providers like OpenLoop Health to manage the telehealth component of its business. OpenLoop is currently embroiled in its own legal battles following a massive data breach that exposed the sensitive medical information of roughly 1.6 million patients.

Furthermore, a class-action lawsuit filed in Delaware alleges that the network of telehealth sites operated by these entities is effectively selling "modern-day snake oil." The complaint centers on the efficacy of oral compounded GLP-1 tablets, which the plaintiffs argue have no demonstrated mechanism for absorption. Because tirzepatide is a large peptide molecule, it is typically destroyed by stomach acid before reaching the bloodstream—a physiological hurdle that the suit claims these "storefronts" ignore in their pursuit of profit.

Official Responses and the "Caveman" Defense

When confronted with evidence of misleading advertising and regulatory non-compliance, Matthew Gallagher has frequently adopted a combative stance on social media. Rather than addressing the specific allegations of consumer fraud, he has mocked his critics, characterizing them as "low energy" individuals who fail to grasp the transformative potential of white-label, AI-driven business models.

In a now-deleted post on X, he compared his critics to "cavemen" struggling to understand the discovery of fire. He further equated the use of AI-generated doctors to standard marketing stock art, despite the obvious ethical and legal distinction between using a stock photo and creating a fake medical professional to dispense pharmaceutical advice.

Implications for the Future of Telehealth

The MEDVi saga serves as a cautionary tale for the intersection of generative AI and healthcare. While the technology offers undeniable efficiencies in operations and logistics, it also lowers the barrier to entry for bad actors to engage in large-scale deception.

The case highlights three critical areas for future oversight:

- Affiliate Accountability: The "affiliate-as-a-service" model allows companies to distance themselves from fraudulent advertising while still reaping the financial rewards. Regulatory bodies are now being urged to hold the primary beneficiary of such ads accountable for the behavior of their affiliates.

- Medical Credentialing: The ease with which sites can present AI-generated personas as board-certified physicians necessitates a more robust verification system for telehealth platforms, whether blockchain-backed or centralized.

- Clinical Oversight: The reliance on "telehealth-in-a-box" platforms that operate with minimal human physician oversight presents a systemic risk to patient safety. As the dispute over the efficacy of oral GLP-1 tablets suggests, the shift toward "personalized" compounding may be outpacing the clinical science, putting patients at risk of taking ineffective or potentially harmful substances.

As regulators continue to investigate, the story of MEDVi is far from over. Whether it represents the future of entrepreneurship or a cautionary blueprint for the next wave of digital scams remains to be seen. What is clear, however, is that in the race to build the next billion-dollar company, the guardrails of ethics and consumer protection are becoming increasingly difficult to ignore.