The integration of artificial intelligence (AI) into the American healthcare system has transitioned from a futuristic concept to an operational reality. Across the country, insurers, providers, and patients are increasingly relying on machine learning and algorithmic tools to navigate the complex "claims review cycle"—the process of determining what care is covered, what it costs, and who pays for it. However, as these technologies reshape the relationship between patients and health plans, a significant policy clash is emerging between federal deregulation efforts and state-level consumer protection mandates.

The Trump administration’s recently unveiled National Policy Framework for Artificial Intelligence (the "AI Framework") signals a paradigm shift. By advocating for federal preemption of "cumbersome" state laws, the administration aims to accelerate AI deployment by removing regulatory hurdles. This approach stands in stark contrast to the previous administration’s focus on establishing robust federal safeguards to protect patients from algorithmic bias and privacy breaches. As Congress prepares to debate the future of AI legislation, the healthcare industry sits at a crossroads: how to balance the promise of unprecedented administrative efficiency against the risks of biased denials, compromised privacy, and eroded patient rights.

The Claims Review Cycle: Where AI Meets Medicine

To understand the stakes, one must look at how AI is currently being deployed across the healthcare ecosystem. Prior authorization—the requirement that a patient obtain approval before receiving a treatment—and retrospective claims review represent the primary friction points in medical billing.

Insurers and TPAs

Health insurers and Third-Party Administrators (TPAs) manage millions of claims annually. AI has become a primary tool for increasing speed and accuracy. According to a recent National Association of Insurance Commissioners (NAIC) survey of 93 insurers, 84% are currently utilizing AI or machine learning for utilization management and prior authorization. These algorithms process vast datasets to predict coverage outcomes, theoretically reducing the time a patient waits for a procedure to be approved.

Providers and Revenue Cycle Management

On the other side of the ledger, healthcare providers are adopting AI to maximize reimbursement. "Revenue Cycle Management" (RCM) tools now use generative AI to automate medical coding and draft appeal letters. Ambient scribe technology, which documents patient encounters in real time, is also being used to populate electronic health records (EHRs) in ways that optimize billing codes. While this reduces the administrative burden on doctors, it also introduces the risk of "upcoding," that is, billing for a more complex or costly service than the one actually delivered.

Patients and the Rise of AI Advocacy

Patients, often overwhelmed by the complexity of insurance denials, are increasingly turning to AI-powered apps to generate appeal letters. By feeding clinical notes and plan documents into large language models, patients can now challenge denials with unprecedented speed. However, uploading those records also raises significant privacy and security concerns about the data shared with third-party tech developers.

Chronology: The Evolution of AI Oversight

The current policy landscape is the result of rapid technological adoption outpacing legislative action.

- 2023–2024: Increased scrutiny of AI in healthcare leads the NAIC to adopt a "Model Bulletin" for insurance regulators, which establishes expectations for insurers using AI in claims processing.

- January 2025: The incoming Trump administration rescinds a comprehensive Biden-era Executive Order that had sought to establish federal standards for "safe and responsible" AI use in healthcare.

- July 2025: The White House releases an AI Action Plan, threatening to withhold federal funding from states that implement "burdensome" AI regulations.

- December 2025: An Executive Order is issued, establishing a Department of Justice litigation task force specifically designed to challenge state-level AI laws that conflict with federal policy.

- March 2026: The Trump administration releases its formal National Policy Framework for Artificial Intelligence, proposing a national standard that would preempt specific state-level consumer protections.

Supporting Data and Risks: The Bias and Privacy Gap

The rapid deployment of AI is not without technical and ethical perils. The most significant concern involves the quality of data used to train these models.

The Problem of Biased Proxies

A landmark 2019 study published in Science found that an algorithm using historical health costs as a proxy for health needs systematically underestimated the needs of Black patients. Because systemic inequities have historically limited access to care for marginalized groups, an algorithm may interpret lower utilization as lower medical necessity, thereby perpetuating and automating existing health disparities.
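The proxy effect described above can be illustrated with a toy calculation. The sketch below is not the study's method or data: the two groups, the need scale, and the 65% spending factor are all invented for illustration. It assumes both groups have identical true health needs, but that group B's historical spending is lower because of reduced access to care, and then compares who gets selected when patients are ranked by cost versus by need.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str
    need: int  # true health need, hypothetical 1-10 scale
    cost: int  # observed historical spending, hypothetical dollars


def build_population(spend_per_need_a=100, spend_per_need_b=65):
    """Both groups get the same needs; group B spends less per unit of need.

    The spending rates are illustrative assumptions standing in for
    unequal access to care, not measured values.
    """
    patients = []
    for need in range(1, 11):
        patients.append(Patient("A", need, need * spend_per_need_a))
        patients.append(Patient("B", need, need * spend_per_need_b))
    return patients


def select_top(patients, key, k):
    """Pick the k highest-ranked patients under the given scoring key."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected, group):
    """Fraction of the selected patients who belong to `group`."""
    return sum(p.group == group for p in selected) / len(selected)


population = build_population()

# Ranking by the proxy (past cost) versus ranking by the real target (need).
by_cost = select_top(population, key=lambda p: p.cost, k=6)
by_need = select_top(population, key=lambda p: p.need, k=6)

print("Group B share when ranked by need:", share_of_group(by_need, "B"))
print("Group B share when ranked by cost:", share_of_group(by_cost, "B"))
```

In this toy setup, ranking by need selects the two groups equally, while ranking by cost selects far fewer group B patients even though their needs are the same. Real models are much more complex, but the mechanism is the one the paragraph describes: the proxy carries the access gap into the prediction.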

The HIPAA "Privacy Gap"

While the Health Insurance Portability and Accountability Act (HIPAA) protects data held by insurers, providers, and the business associates that work on their behalf, it generally does not reach the burgeoning sector of third-party tech companies that fall outside those categories, such as consumer-facing AI apps. As interoperability regulations force health systems to share data more freely via APIs, there is a mounting risk that patient data could be repurposed, sold, or used to train commercial models without explicit consent or robust security oversight.

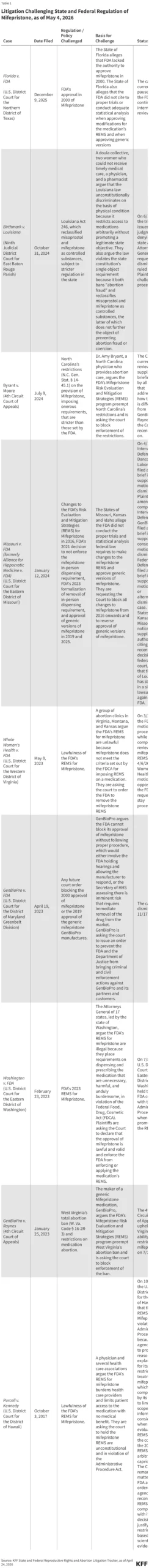

Litigation Trends

The judiciary is already seeing the fallout. Multiple class-action lawsuits are currently moving through the courts, challenging insurers for using "black-box" algorithms to deny claims without meaningful human review. Plaintiffs argue that these automated systems fail to account for unique clinical circumstances, in violation of the terms of the plaintiffs' coverage.

Official Responses and the Push for Federalism

The Trump administration’s position is rooted in the belief that a "patchwork" of state regulations stifles innovation and hampers American competitiveness. By promoting an "industry-led" standard, the administration hopes to create a uniform environment for tech developers.

The Case for Preemption

The AI Framework argues that if every state sets its own standard for AI transparency or auditing, the compliance cost for developers will be prohibitive. The administration suggests that federal legislation should focus on high-level outcomes—protecting children and preventing fraud—while leaving the technical deployment of AI largely to the private sector.

The Defense of State Authority

State legislators, however, argue that they are the primary protectors of consumer health. With the federal government historically slow to act, many states have stepped into the void. State Attorneys General contend that they need the power to hold developers accountable when their models cause systemic harm. As seen in the Massachusetts Attorney General’s recent advisory, states are interpreting existing consumer protection laws to cover AI, creating a robust, if fragmented, shield for patients.

Implications: The Future of the Patient-Plan Relationship

The debate over AI in healthcare is essentially a debate over the future of the "fiduciary" duty of insurers. If a computer program denies a life-saving surgery, who is responsible?

The Erosion of "Full and Fair" Review

Under the Employee Retirement Income Security Act (ERISA), private employer plans are required to provide a "full and fair" review of denied claims. As AI replaces human claims adjusters, the legal definition of "fairness" is under extreme pressure. If the Trump administration succeeds in preempting state-level AI oversight, the burden of enforcing these standards will shift entirely to federal agencies such as the Department of Labor or the FTC. Critics fear this will lead to a "deregulatory vacuum" where insurers are incentivized to prioritize algorithm-driven denial rates over patient outcomes.

The Interoperability Paradox

Federal efforts to improve data sharing (interoperability) are designed to help patients. Yet these very APIs create the "pipes" through which sensitive medical data flows into the hands of AI developers. The tension between the desire for seamless, tech-enabled healthcare and the need to keep medical records private will be the defining challenge of the next decade.

Looking Ahead

As Congress weighs the administration’s AI Framework, the central question remains: will the legislative solution be one that empowers patients or one that protects developers? The outcome will determine whether AI serves as a tool for medical equity or an instrument of automated, inaccessible bureaucracy. For now, the legal battles in the courts—and the policy battles in statehouses—continue to serve as the only check on a technology that is moving faster than the law can follow. Patients, providers, and insurers remain in a state of high-stakes transition, waiting to see if federal policy will eventually codify the protections they currently rely on, or dismantle them in the name of innovation.