The global landscape of dermatology is facing a critical turning point. As skin cancer rates continue their alarming climb—with Australia, the United States, and Mexico reporting significant spikes in incidence—healthcare systems are struggling to bridge the gap between an overwhelming caseload and the limited availability of specialized dermatologists.

A breakthrough in Computer-Aided Diagnosis (CAD) has emerged from recent research, introducing a "Frugal AI" framework that promises to assist clinicians in the early identification of high-risk lesions. By combining the precision of Multi-Scale Gabor Filter Banks (MS-GFBs) with the adaptive power of Differential Evolution (DE), this new method offers a transparent, computationally efficient, and highly accurate alternative to traditional diagnostic tools.

The Growing Public Health Burden of Melanoma

Skin cancer is now one of the most prevalent malignancies globally. In Australia, recent epidemiological data indicate that more than 18,000 new cases are diagnosed annually, a 25% increase over the last decade. Similarly, in the United States, nearly 100,000 new cases appear every year, with over 7,900 deaths. Mexico has also felt the impact, with skin cancer now ranking as the fifth leading cause of hospital morbidity.

The most dangerous form, melanoma, is responsible for approximately 75% of all skin cancer-related deaths. The Skin Cancer Foundation notes that time is of the essence; patients with stage 1 localized tumors must receive treatment within 30 days of biopsy. A delay of even a few weeks can increase the mortality risk by 5%, while longer delays can escalate that risk by up to 41%.

Given these stakes, the development of CAD tools that can prioritize urgent cases is no longer a luxury—it is a necessity for public health.

The Evolution of Diagnostic Technology

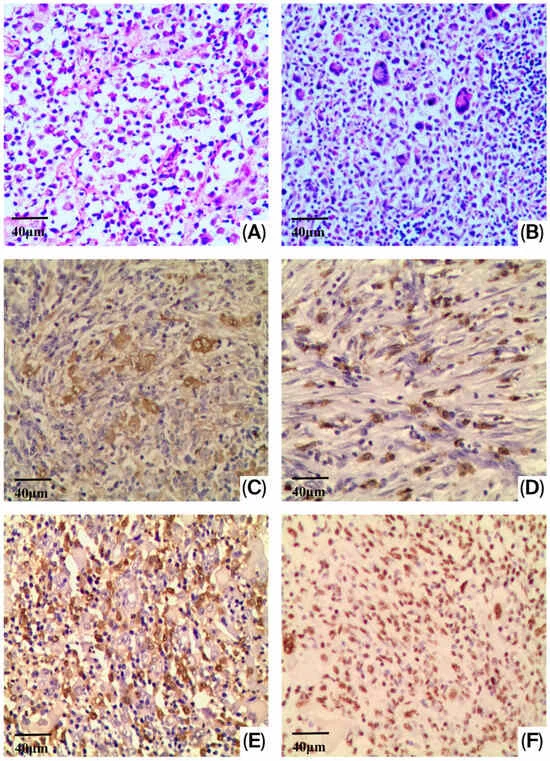

Traditionally, clinicians have relied on the "ABCD" rule: Asymmetry, Border irregularity, Color variation, and structural Diversity. While this rule is the gold standard for visual inspection, translating it into automated code has proven difficult. Most current computational models focus on global statistics that often overlook the directional organization of lesion heterogeneity—a hallmark of malignancy.
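To make the point about directional organization concrete, here is a minimal sketch, not taken from the study. It builds two synthetic textures that share the same pixel histogram (and therefore the same global mean and variance) but differ in orientation, then shows that a single oriented Gabor filter separates them. The kernel size, wavelength, sigma, the stripe images, and the FFT-based circular convolution are all illustrative assumptions.

```python
import numpy as np

def gabor_kernel(size, wavelength, theta, sigma):
    """Real (cosine) Gabor kernel: a Gaussian envelope times an oriented sinusoid."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1]
    # Rotate coordinates so the sinusoid varies along direction theta.
    x_rot = x * np.cos(theta) + y * np.sin(theta)
    envelope = np.exp(-(x**2 + y**2) / (2.0 * sigma**2))
    carrier = np.cos(2.0 * np.pi * x_rot / wavelength)
    kernel = envelope * carrier
    return kernel - kernel.mean()  # zero mean, so flat brightness gives no response

def response_energy(image, kernel):
    """Mean squared filter response, using circular convolution via the FFT."""
    spectrum = np.fft.fft2(image) * np.fft.fft2(kernel, s=image.shape)
    return float(np.mean(np.real(np.fft.ifft2(spectrum)) ** 2))

# Toy textures (assumption): stripes varying along x, and the same image transposed.
cols = np.arange(64)
stripes_x = np.tile(np.sin(2.0 * np.pi * cols / 8.0), (64, 1))
stripes_y = stripes_x.T

# Global statistics are identical: both images contain exactly the same pixel values.
same_histogram = np.array_equal(np.sort(stripes_x.ravel()), np.sort(stripes_y.ravel()))

# A filter tuned to variation along x responds strongly to only one of them.
k = gabor_kernel(size=15, wavelength=8.0, theta=0.0, sigma=4.0)
energy_x = response_energy(stripes_x, k)
energy_y = response_energy(stripes_y, k)

print("same histogram:", same_histogram)
print("energy (x-stripes):", energy_x)
print("energy (y-stripes):", energy_y)
```

A bank of such filters at several orientations and scales yields one energy value per filter, which is the kind of directional signature that histogram-style statistics cannot provide.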

The Shift Toward Frugal AI

In the current diagnostic market, Deep Learning (DL) models—specifically Convolutional Neural Networks (CNNs)—have dominated the conversation. However, these models often function as "black boxes," lacking the interpretability required for clinical trust. Furthermore, they are notoriously resource-intensive, requiring massive, well-annotated datasets that are rarely available in public or resource-constrained healthcare systems.

The newly proposed framework, termed the Evolutionary Gabor-based Melanoma Descriptor (Evo-GMD), challenges this trend. By adopting a "Frugal AI" perspective, the researchers have developed a system that learns complex feature representations without the need for massive datasets, data augmentation, or expensive pre-training.

Chronology of the Research and Methodology

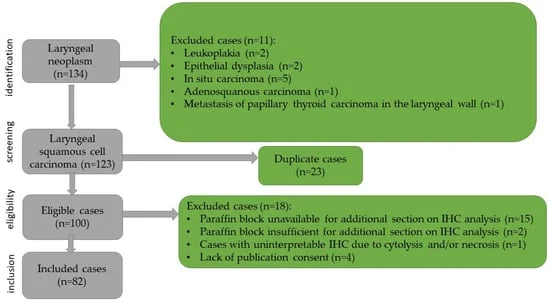

The development of the Evo-GMD framework followed a rigorous, two-stage experimental design to ensure reliability and avoid the "overfitting" traps that often plague AI medical research.

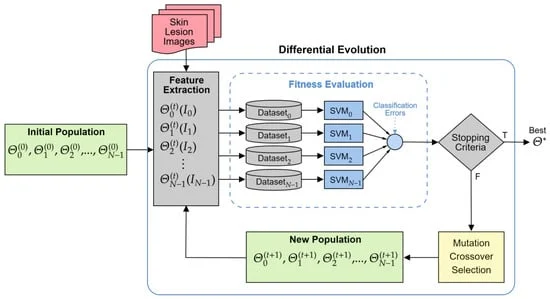

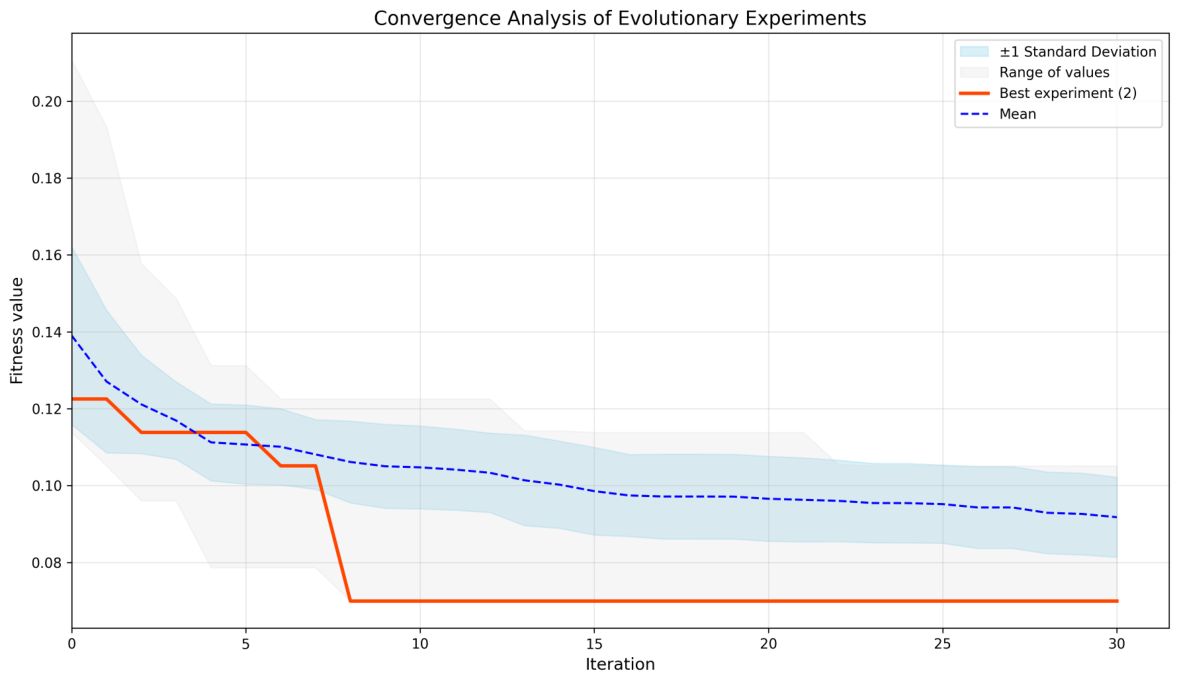

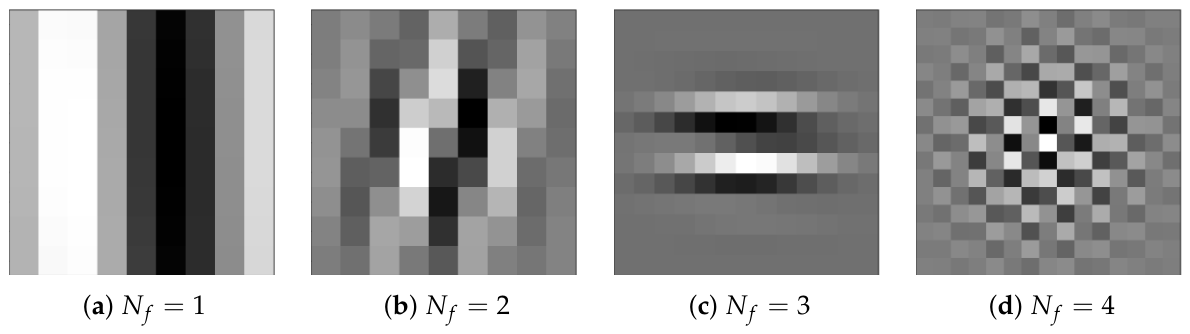

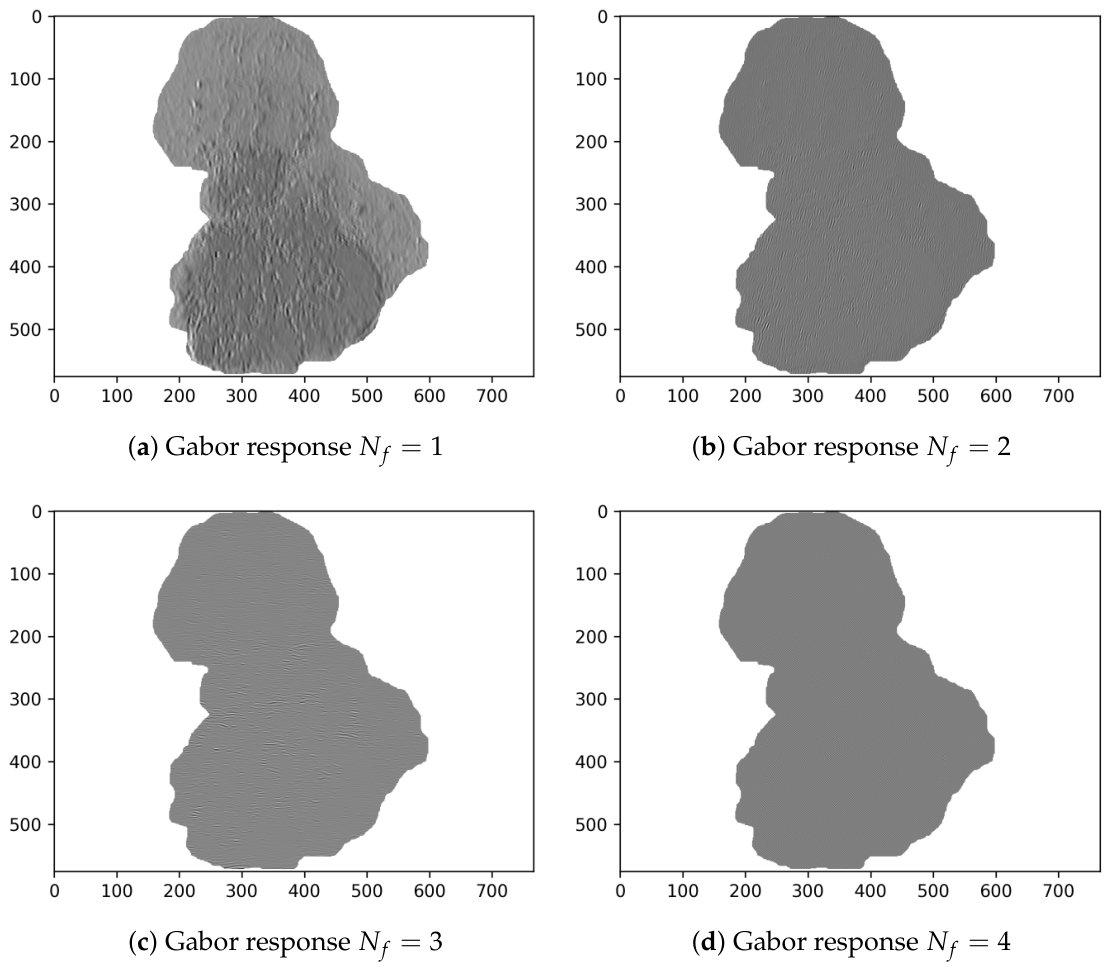

- Stage 1: Evolutionary Optimization. Using the PH2 dataset, the team used Differential Evolution to optimize the parameters of the Gabor filters. Treating candidate filter banks as a population of potential solutions, the algorithm iteratively refined the frequency, orientation, and scale of the filters. A five-fold cross-validation scheme was used to check that the resulting descriptor was stable.

- Stage 2: Validation on Independent Data. Once the optimal descriptor was identified, it was tested on a previously unseen portion of the dataset. The goal was to assess its real-world generalization.
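The Stage 1 loop can be sketched roughly as follows. This is a toy illustration under stated assumptions, not the authors' implementation: the synthetic patches, the nearest-centroid classifier, the parameter bounds, the DE/rand/1/bin variant with `F=0.7` and `CR=0.9`, and the use of five-fold accuracy as the fitness value are all hypothetical choices made to keep the example short and runnable.

```python
import numpy as np

rng = np.random.default_rng(0)

def gabor_kernel(size, wavelength, theta, sigma):
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1]
    x_rot = x * np.cos(theta) + y * np.sin(theta)
    k = np.exp(-(x**2 + y**2) / (2.0 * sigma**2)) * np.cos(2.0 * np.pi * x_rot / wavelength)
    return k - k.mean()

def descriptor(image, params):
    """One log-energy per filter; params is a flat vector of (wavelength, theta, sigma) triples."""
    feats = []
    for wavelength, theta, sigma in params.reshape(-1, 3):
        k = gabor_kernel(15, wavelength, theta, sigma)
        resp = np.real(np.fft.ifft2(np.fft.fft2(image) * np.fft.fft2(k, s=image.shape)))
        feats.append(np.log1p(np.mean(resp**2)))
    return np.array(feats)

def make_patch(label):
    """Toy stand-in for a lesion patch (assumption): class 1 has a stronger oriented texture."""
    stripes = np.tile(np.sin(2.0 * np.pi * np.arange(32) / 6.0), (32, 1))
    return (0.2 + 0.8 * label) * stripes + rng.normal(0.0, 1.0, (32, 32))

def cv_accuracy(params, images, labels, n_folds=5):
    """Fitness: five-fold cross-validated accuracy of a nearest-centroid classifier."""
    feats = np.array([descriptor(img, params) for img in images])
    folds = np.arange(len(labels)) % n_folds
    correct = 0
    for f in range(n_folds):
        train, test = folds != f, folds == f
        c0 = feats[train & (labels == 0)].mean(axis=0)
        c1 = feats[train & (labels == 1)].mean(axis=0)
        d0 = np.linalg.norm(feats[test] - c0, axis=1)
        d1 = np.linalg.norm(feats[test] - c1, axis=1)
        correct += np.sum((d1 < d0).astype(int) == labels[test])
    return correct / len(labels)

def differential_evolution(fitness, lower, upper, pop_size=8, generations=5, F=0.7, CR=0.9):
    """Minimal DE/rand/1/bin that maximizes `fitness` inside box bounds."""
    dim = len(lower)
    pop = rng.uniform(lower, upper, (pop_size, dim))
    scores = np.array([fitness(p) for p in pop])
    history = [scores.max()]
    for _ in range(generations):
        for i in range(pop_size):
            others = [j for j in range(pop_size) if j != i]
            a, b, c = pop[rng.choice(others, 3, replace=False)]
            mutant = np.clip(a + F * (b - c), lower, upper)   # mutation
            cross = rng.random(dim) < CR                      # binomial crossover mask
            cross[rng.integers(dim)] = True                   # keep at least one mutant gene
            trial = np.where(cross, mutant, pop[i])
            s = fitness(trial)
            if s >= scores[i]:                                # greedy one-to-one selection
                pop[i], scores[i] = trial, s
        history.append(scores.max())
    best = int(np.argmax(scores))
    return pop[best], scores[best], history

labels = np.arange(40) % 2
images = [make_patch(label) for label in labels]
n_filters = 2
lower = np.tile([3.0, 0.0, 2.0], n_filters)      # wavelength, theta, sigma lower bounds
upper = np.tile([12.0, np.pi, 6.0], n_filters)   # matching upper bounds
best_params, best_score, history = differential_evolution(
    lambda p: cv_accuracy(p, images, labels), lower, upper)
print(best_params.reshape(-1, 3))
print("best cross-validated accuracy:", best_score)
```

Because selection only replaces an individual when the trial scores at least as well, the best fitness in `history` never decreases from one generation to the next. A held-out split that the optimizer never sees, as in Stage 2, is still needed to judge generalization.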

The results were striking. The proposed Evo-GMD outperformed traditional texture-based methods (such as Haralick and LBP) and even surpassed complex CNN architectures in several key performance metrics, achieving an accuracy of up to 95%.

Supporting Data: Why "Frugal" Beats "Complex"

The study provides a comparative performance breakdown that challenges the current obsession with ever-larger models. When tested on the PH2 dataset, the median Evo-GMD achieved an accuracy of 0.9524 and an F1-score of 0.9231. In contrast, the baseline CNN reached 0.8750, and the sophisticated texture-based CNN failed to generalize, falling to an accuracy of 0.3333.

The researchers also conducted stress tests using the ISIC 2017 dataset, which contains significantly more noise, artifacts, and clinical heterogeneity. While all models, including the proposed one, faced performance degradation, the Evo-GMD maintained consistency where other models collapsed into "majority class bias"—a common failure where an AI simply predicts the most frequent class to minimize error.
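Majority class bias is easy to miss when only accuracy is reported. The short sketch below uses a hypothetical test set of 80 benign lesions and 20 melanomas (these counts and both sets of predictions are invented for illustration, not drawn from PH2 or ISIC 2017) to show how a collapsed model can look acceptable on accuracy while detecting no melanomas at all.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, sensitivity (recall), precision and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    accuracy = (tp + tn) / len(pairs)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    denom = precision + sensitivity
    f1 = 2 * precision * sensitivity / denom if denom else 0.0
    return {"accuracy": accuracy, "sensitivity": sensitivity,
            "precision": precision, "f1": f1}

# Hypothetical test set: 80 benign lesions (0) followed by 20 melanomas (1).
y_true = [0] * 80 + [1] * 20

# A collapsed model that always predicts the majority (benign) class.
always_benign = [0] * 100

# A hypothetical useful model: 6 false alarms on benign lesions, 4 missed melanomas.
useful_model = [1] * 6 + [0] * 74 + [0] * 4 + [1] * 16

collapsed = binary_metrics(y_true, always_benign)
useful = binary_metrics(y_true, useful_model)

print(f"collapsed: accuracy={collapsed['accuracy']:.2f} f1={collapsed['f1']:.2f}")
# collapsed: accuracy=0.80 f1=0.00
print(f"useful:    accuracy={useful['accuracy']:.2f} f1={useful['f1']:.2f}")
# useful:    accuracy=0.90 f1=0.76
```

The collapsed model scores 0.80 on accuracy with zero sensitivity, which is why F1 or sensitivity should be read alongside accuracy on imbalanced lesion datasets.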

Implications for Future Clinical Practice

The implications of this research are profound for the future of dermatological diagnostics:

- Interpretability: Unlike deep learning models, whose internal weights are often impossible to decipher, the Evo-GMD is built from Gabor filters defined by a few explicit parameters (frequency, orientation, and scale), so clinicians can inspect how the descriptor analyzes the lesion’s texture. This transparency is crucial for medical adoption.

- Accessibility: Because this framework does not require the massive computational power or the enormous labeled datasets of standard deep learning, it can be deployed in rural clinics or resource-constrained hospitals where high-end GPU infrastructure is unavailable.

- Speed: By focusing on efficient texture analysis rather than brute-force pattern matching, the system is capable of providing rapid, actionable insights, potentially helping clinicians prioritize urgent melanoma cases within the critical 30-day treatment window.

Conclusion and Future Outlook

The research team, composed of experts from institutions including the National Institute of Technology of Mexico (TecNM), concludes that the future of AI in medicine does not necessarily lie in building larger, more complex black-box models. Instead, it lies in aligning model design with the specific clinical constraints of the field.

The Evo-GMD framework represents a significant step toward "explainable AI." By capturing the directional organization of lesion textures—the same patterns experienced dermatologists look for—this framework bridges the gap between human intuition and machine precision.

Future work will focus on expanding this approach to even more diverse datasets, incorporating color-sensitive fusion strategies, and continuing to refine the balance between high-dimensional feature extraction and computational efficiency. As the medical community continues to navigate the integration of AI, tools like the Evo-GMD offer a pathway toward safer, faster, and more equitable diagnostic care.